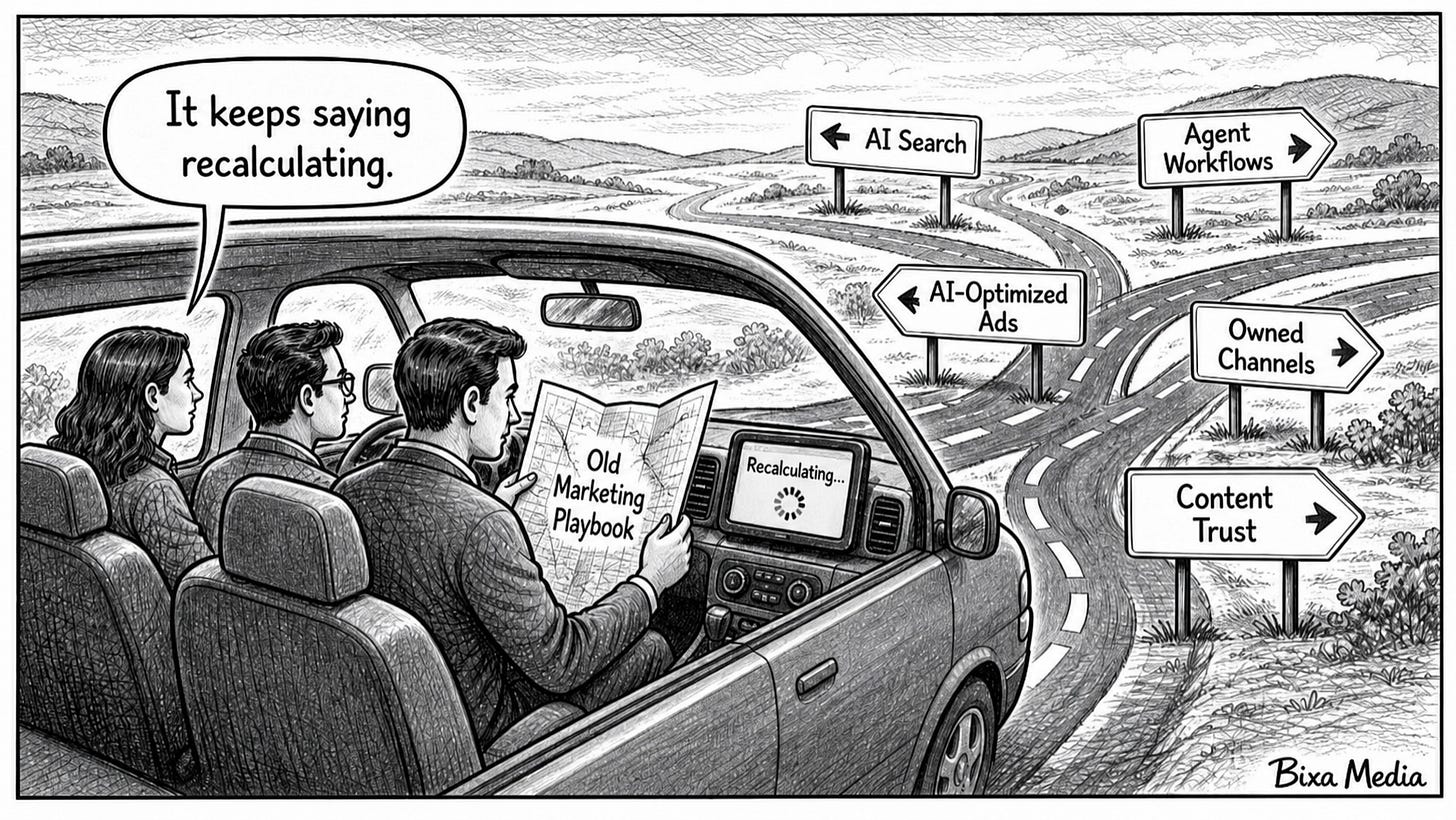

What's Changed in AI (for Marketers)

May 5, 2026 Issue - The Map Changed. Most Teams Are Still Using the Old One.

AI capabilities do not wait for organizational readiness. They ship, they integrate, and they start affecting how your tools work whether your team has planned for them or not.

That gap - between what the stack can now do and how most marketing teams actually operate - is the story of 2026 so far. And it is getting harder to ignore.

This issue covers where the cracks are showing up and what to do about them.

🔥 High Impact

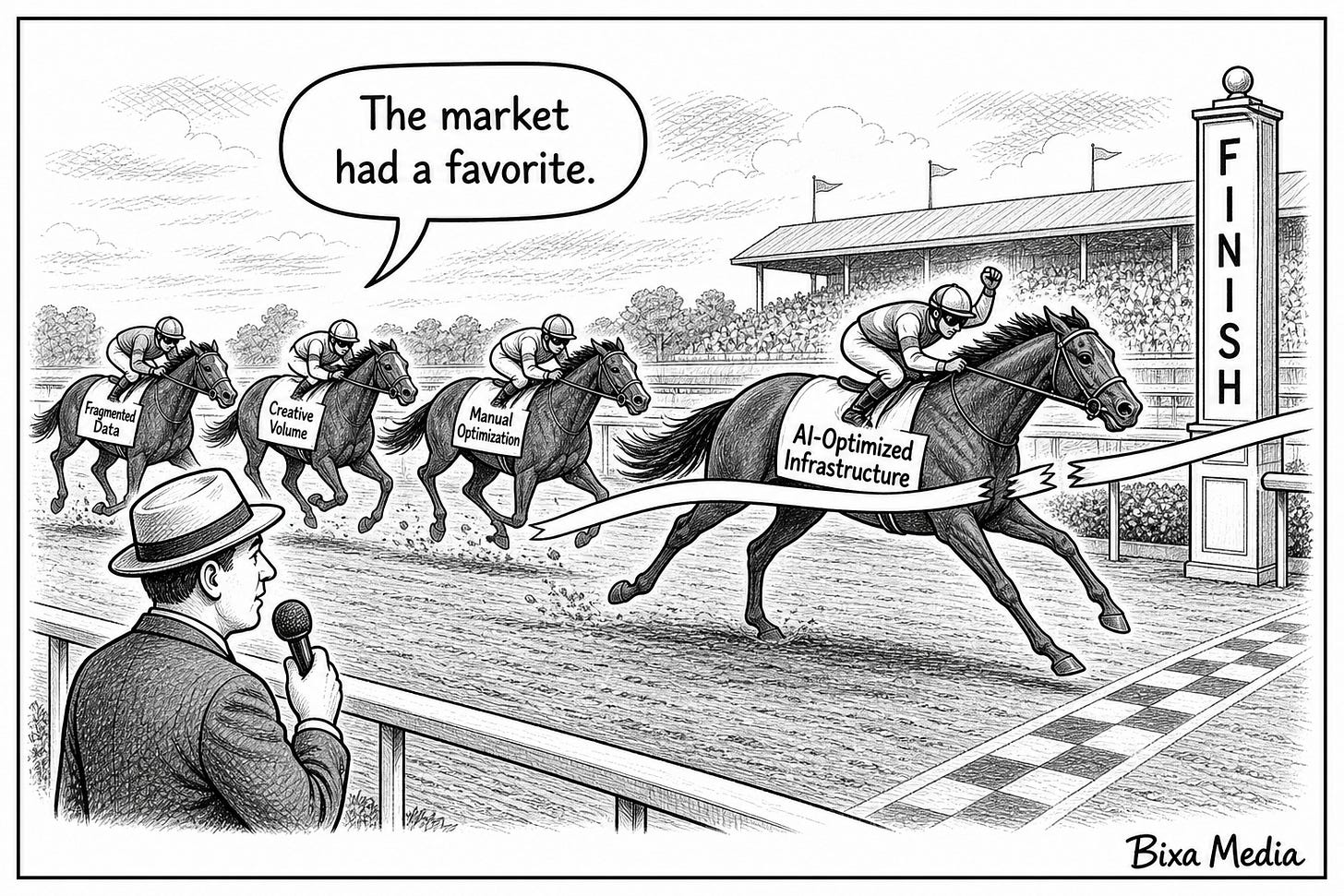

The Ad Market Voted. AI-Optimized Infrastructure Won.

What’s Changed

For the first time in digital advertising history, Meta is projected to overtake Google in global ad revenue. According to Emarketer’s latest forecast, Meta will generate $243.46 billion in net worldwide ad revenue in 2026, edging past Google’s $239.54 billion. In share terms, Meta is expected to capture 26.8% of global digital ad spend compared to Google’s 26.4% - the first time Google has lost the top spot since digital advertising became an industry.

Q1 2026 earnings confirmed the momentum. Meta’s ad revenue jumped 33% year over year to $55 billion. Google grew 15% to $77.25 billion. Both numbers are strong, but the growth rate gap tells the real story. For the full year, Meta is forecast to grow 24.1%. Google is forecast to grow 11.9%.

In the last issue, we covered Muse Spark - Meta’s first proprietary model from Superintelligence Labs, now running across Facebook, Instagram, WhatsApp, Messenger, and Ray-Ban glasses. The ad revenue story is the market’s verdict on that infrastructure investment. Meta built Muse Spark to improve how its ads perform across three billion users. The revenue growth says it’s working.

Why It Matters

Google built its dominance on search intent. When someone searches, they tell you exactly what they want, and you pay to show up at that moment. That model worked for two decades and shaped how most marketing teams think about paid media.

Meta built its dominance on behavior. It knows what people do, what they respond to, and how to optimize delivery in real time across a unified intelligence layer. Muse Spark tightened that loop further. The result is an ad platform that doesn’t wait for someone to express intent… it predicts it.

The market is rewarding prediction over declaration. That’s the shift many media plans haven’t accounted for.

What This Means

AI-optimized ad delivery is now the performance benchmark, not the exception. Meta’s growth isn’t happening because more advertisers showed up. It’s happening because the platform is converting existing spend more efficiently. Advantage+ campaigns, AI-driven creative optimization, and Muse Spark-powered targeting are compounding. Teams running manual campaign structures against this infrastructure are competing on uneven ground.

The relationship between intent and performance is being redefined. Search captured intent at the moment of expression. Meta’s AI infrastructure captures intent before it’s expressed, by modeling behavior across three billion users. That changes where high-value attention lives… and where budgets should follow.

This is a structural shift, not a quarterly blip. Meta’s growth rate is more than double Google’s. Emarketer’s forecast covers the full year. Morgan Stanley projected that Meta’s quarterly ad revenue would surpass Google’s search ad revenue specifically by Q2 2026. One of these trajectories is accelerating. The other is holding steady. Ad budgets built on 2024 assumptions are already misaligned.

What To Do

If your paid media budget is still heavily weighted toward search, this data warrants a closer look. Not necessarily a permanent reallocation, but a structured experiment. Run a controlled test that shifts a portion of search budget toward Meta’s AI-driven formats, specifically Advantage+ campaigns where the platform’s optimization has the most room to work. Set a clear measurement window, define what success looks like before you start, and let the data make the case rather than the headline.
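One way to "define what success looks like before you start" is to write the pass/fail rule down before any budget moves. The sketch below is illustrative only: the arm names, the spend and conversion figures, and the 10% threshold are made-up assumptions, not numbers from any source cited in this issue.

```python
from dataclasses import dataclass


@dataclass
class Arm:
    """One side of the budget test, measured over the same fixed window."""
    name: str
    spend: float       # total spend in the window (hypothetical figure)
    conversions: int   # conversions attributed in the same window

    def cost_per_conversion(self) -> float:
        # Treat an arm with no conversions as infinitely expensive.
        if self.conversions == 0:
            return float("inf")
        return self.spend / self.conversions


def meets_success_criterion(control: Arm, test: Arm,
                            min_improvement: float = 0.10) -> bool:
    """True if the test arm's cost per conversion is at least
    `min_improvement` cheaper than the control's (0.10 means 10% cheaper).
    The threshold is an assumption; agree on it before the test starts."""
    if test.conversions == 0:
        return False
    baseline = control.cost_per_conversion()
    return test.cost_per_conversion() <= baseline * (1 - min_improvement)


# Hypothetical results for one measurement window.
search = Arm("search (control)", spend=10_000.0, conversions=200)
advantage_plus = Arm("Advantage+ (test)", spend=10_000.0, conversions=250)

print(meets_success_criterion(search, advantage_plus))
```

This only compares cost per conversion over one window. It says nothing about statistical significance or conversion quality, so treat a pass as a reason to extend the test rather than as a final verdict.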

If you are already running Meta campaigns, revisit how much of your spend is in manual versus AI-optimized structures. The performance gap between the two is widening as Muse Spark improves the platform’s ability to match creative to context. Teams still running legacy campaign structures are leaving performance on the table.

If you manage budgets for clients, this is a conversation worth having proactively. The shift is documented, the numbers are public, and waiting for clients to raise it puts you in a reactive position on a story that is only going to get louder.

Ignore This If

Paid media is not part of your marketing mix and you have no plans to test it.

Sources

Emarketer - Meta to Surpass Google in Digital Ad Revenues for First Time Ever (April 2026)

Marketing Dive - Meta and Google ad revenues soar thanks to AI, but big picture is blurry (April 30, 2026)

Marketing Dive - Meta to shoot past Google in digital ad revenue for first time (April 2026)

Search Engine Land - Meta is on track to overtake Google in global ad revenue for the first time (April 2026)

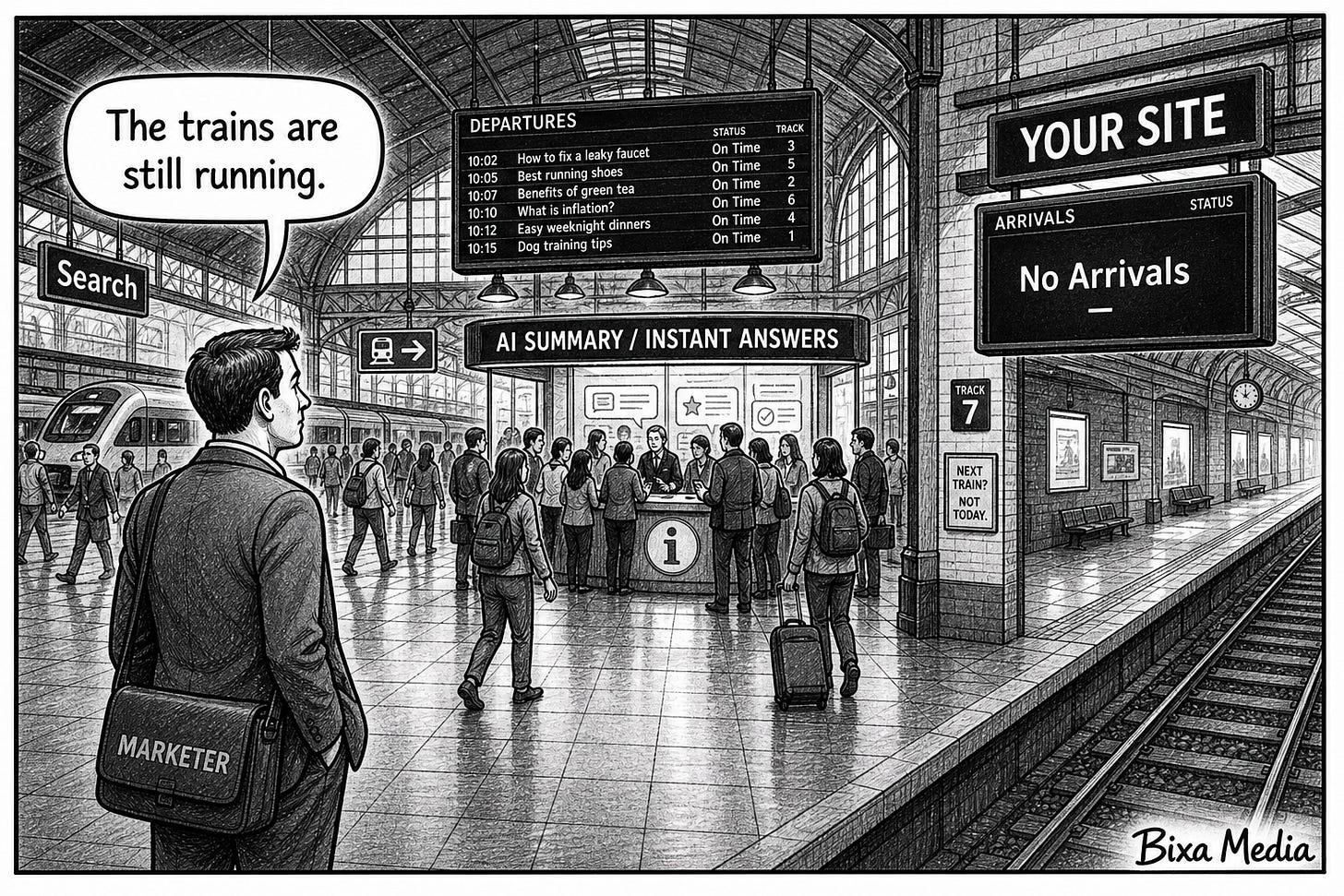

Search Didn't Die. It Just Stopped Sending People to Your Site.

What’s Changed

In the April 6 issue, we covered the directional shift: AI Overviews were reducing clicks, zero-click searches were becoming the norm, and search budgets were starting to move toward GEO. Since then, the data has gotten significantly harder.

B2B organic traffic is down 33.6% year over year, according to Cognism’s Inside Inbound 2026 report. In Google’s AI Mode specifically, the zero-click rate reaches 93%. Organic CTR drops 61% on queries where an AI Overview appears, according to Seer Interactive’s analysis of 25 million impressions across 42 organizations.

The overlap between pages that rank in the top 10 and pages that get cited in AI Overviews has collapsed from 75% in mid-2025 to between 17% and 38% by early 2026. That means ranking well no longer predicts whether your content gets cited in AI answers. They have become two separate problems.

One additional data point worth flagging: publishers who blocked AI crawlers to protect their content saw an average 7% decline in human browsing traffic within weeks of implementation. Blocking AI access didn’t protect traffic. It reduced it.

Why It Matters

The mechanism is simple and it’s not going to reverse. AI Overviews answer the query before the user has any reason to click. Your content informed the answer. You didn’t get the visit.

What makes this particularly difficult for marketing teams is that the standard signals of success are still green. Rankings are holding. Impressions are flat or rising. The traffic isn’t there. Teams that are waiting for a clear signal that something is wrong may already be six to twelve months into a decline they haven’t connected to this cause yet.

And because ranking and citation are now largely separate problems, teams that have invested heavily in SEO are not automatically protected. The search rankings playbook and the AI search visibility playbook are diverging fast.

What This Means

Don’t block AI crawlers. The data shows it hurts human traffic too. Publishers who restricted access to bots like GPTBot and ClaudeBot saw human browsing traffic drop 7% within weeks. The instinct to protect content by blocking AI access is backfiring. If AI systems cannot read your content, they cannot cite it. Reduced citation is now correlating with reduced human traffic, not just bot traffic.
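If you are not sure whether your own site blocks these bots, the rules live in your robots.txt file. Here is a minimal sketch using Python's standard-library `urllib.robotparser` to test a robots.txt against the two crawler names mentioned above. The robots.txt content and the example.com URL are hypothetical placeholders; swap in your own file and a real page.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. In practice, paste in your own site's file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

AI_CRAWLERS = ["GPTBot", "ClaudeBot"]  # the two bots named in this issue


def blocked_crawlers(robots_txt: str, url: str, agents: list[str]) -> list[str]:
    """Return the crawler names that this robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


print(blocked_crawlers(ROBOTS_TXT, "https://example.com/blog/post", AI_CRAWLERS))
# prints ['GPTBot']
```

An empty list means none of the listed crawlers are blocked for that page. This checks robots.txt rules only; blocks applied at a CDN or firewall level would not show up here.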

Owned channels are becoming more valuable, not less. As search delivers less reliable traffic, the channels you control - email and SMS lists, direct audiences, community - absorb more of the acquisition burden. Teams that have been treating owned channels as secondary to search are now in a more exposed position.

Generic content is the most exposed. AI systems cite sources that demonstrate real expertise, original data, and clear structure. Content that ranked because of volume and backlinks - thin informational pages built around keyword density rather than genuine depth - is losing citation ground fast. The sites that built their traffic on surface-level coverage of broad topics are seeing the steepest declines.

What To Do

Start with your highest-traffic informational pages - the ones built to capture top-of-funnel search queries. These are the pages most likely to be summarized by AI Overviews rather than clicked through. Restructure them to be citable: lead with a direct, quotable answer in the first paragraph, use clear subheadings that match user questions, and include original data or specific expertise signals that give AI systems a reason to attribute the source.

Audit which channels are currently carrying your acquisition load and how much of that depends on organic search. If search is carrying significant acquisition weight for your business, the exposure is real enough to warrant accelerating investment in owned channels now rather than waiting for the traffic decline to force the conversation.
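The audit above comes down to simple arithmetic: what share of acquisition does each channel carry? A rough sketch follows, assuming you can export lead counts by channel from your analytics or CRM. The channel names and counts are invented for illustration.

```python
def channel_shares(leads_by_channel: dict[str, int]) -> dict[str, float]:
    """Return each channel's share of total leads as a fraction between 0 and 1."""
    total = sum(leads_by_channel.values())
    if total == 0:
        return {channel: 0.0 for channel in leads_by_channel}
    return {channel: count / total for channel, count in leads_by_channel.items()}


# Hypothetical lead counts for one quarter.
leads = {"organic_search": 450, "paid": 250, "email": 200, "referral": 100}
shares = channel_shares(leads)

# Print channels from largest to smallest share.
for channel, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{channel}: {share:.0%}")
```

What counts as "significant" dependence on organic search is a judgment call for your business. The point of the exercise is to have the number in front of you before the traffic decline forces the conversation.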

Ignore This If

Organic search is not a meaningful source of traffic or leads for your business.

Sources

Cognism - Inside Inbound 2026 (2026)

Seer Interactive - AI Overviews Impact on Organic CTR (April 2026)

MarketingProfs - AI Update, May 1, 2026: AI News and Views From the Past Week (May 1, 2026)

Mersel AI - Why Is Organic Traffic Declining in 2026? (2026)

Demand Local - AI Search Organic Traffic Decline Agencies Face: A 2026 Response Playbook (April 2026)

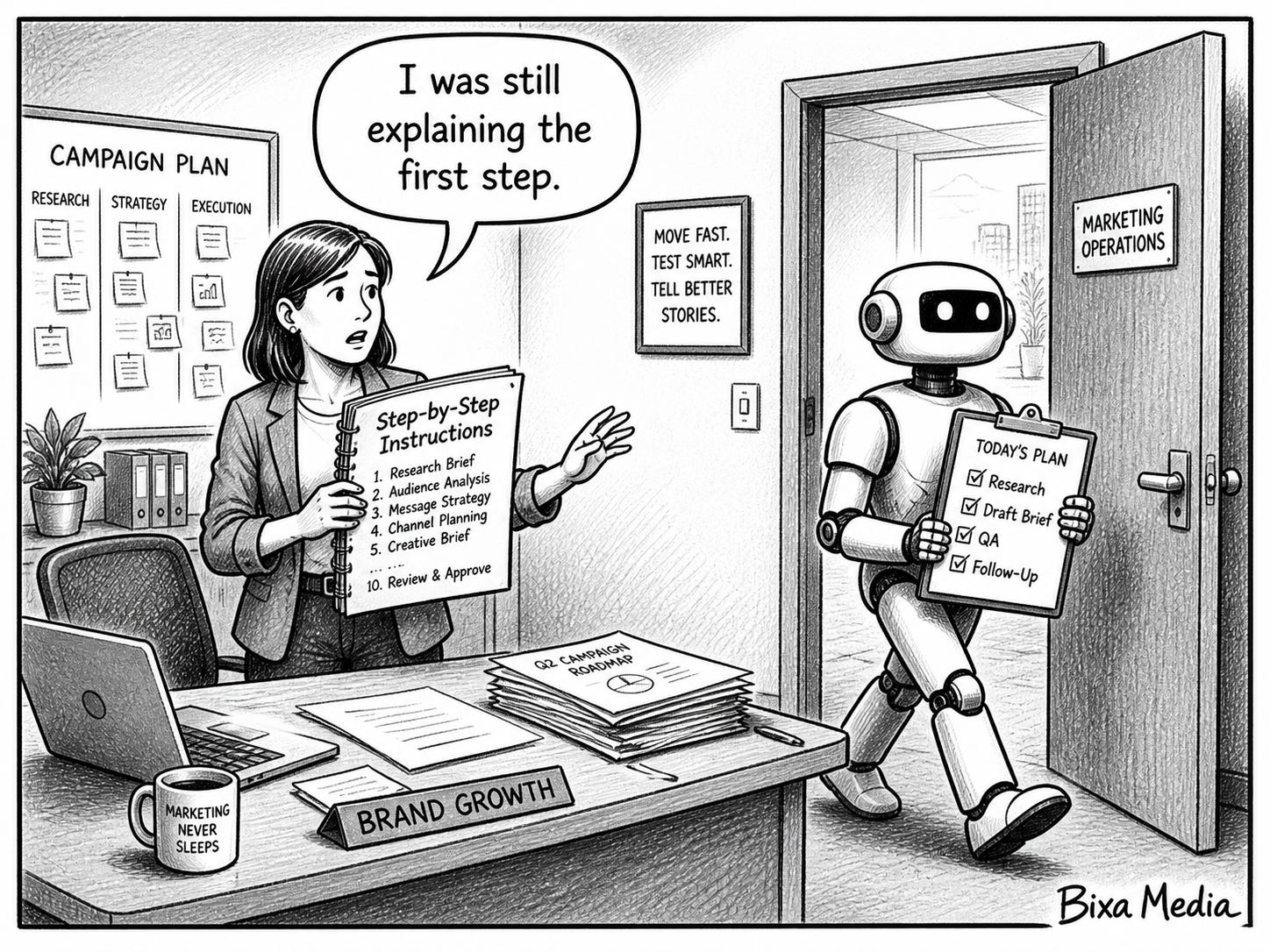

GPT-5.5 Didn't Just Get Smarter. It Got More Independent.

What’s Changed

On April 23, 2026, OpenAI released GPT-5.5 - internally codenamed “Spud” - six weeks after GPT-5.4. If you read our coverage of GPT-5.4, you can think of this as the moment the model stopped being a better assistant and started being a capable worker. OpenAI’s own framing makes the shift explicit: you can now give GPT-5.5 a messy, multi-part task and trust it to plan, use tools, check its work, navigate through ambiguity, and keep going… without you managing every step.

The model launched with significant capability gains in agentic coding, computer use, knowledge work, and multi-step task execution. It handles a one million token context window, matches GPT-5.4’s per-token latency, and runs more efficiently, completing tasks with fewer tokens and fewer retries. GPT-5.5 and GPT-5.5 Pro are available to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex. API access opened April 24.

OpenAI’s internal teams are already using it in real workflows. OpenAI’s Finance team used GPT-5.5 to review 24,771 K-1 tax forms totaling 71,637 pages, accelerating the task by two weeks compared to the prior year. A Go-to-Market employee automated generating weekly business reports, saving five to ten hours a week. More than 85% of OpenAI’s employees use Codex weekly across functions including marketing, finance, communications, and product management.

Why It Matters

GPT-5.5 is the first OpenAI flagship model positioned primarily as an agent runtime rather than a chat model. Previous releases led with quality metrics - reasoning scores, benchmark results, token-level evaluations. GPT-5.5 leads with outcomes: it completes the task, it uses your tools, it doesn’t need you to manage every step.

That is a different product than what most marketing teams are currently using. The majority of teams interacting with ChatGPT today are still in prompt-and-response mode - asking questions, reviewing outputs, adjusting and asking again. GPT-5.5 is designed for something different: give it a goal, connect it to your tools, and let it work.

The gap between teams using AI transactionally and teams using it operationally just got more expensive to ignore. Teams with repeatable workflows and connected systems get compounding leverage from a model like this. Teams still using it for one-off tasks get incrementally better outputs from a tool that is now capable of significantly more.

What This Means

The prompt-and-response model of working with AI is becoming the floor, not the ceiling. GPT-5.5 is designed to interpret intent and execute across multiple steps without constant direction. Teams that have optimized their prompts but haven’t built workflows around AI are getting diminishing returns from their effort. The leverage has moved upstream to system design, not prompt craft.

The tools you already use are about to get significantly more autonomous. GPT-5.5 flows downstream into ad platforms, CRMs, and content tools built on OpenAI’s infrastructure. The automation those tools offer isn’t static - it improves as the underlying model does. Teams don’t need to do anything differently to benefit, but they do need to understand what’s changing underneath them.

Computer use and multi-step task execution are now mainstream capabilities. GPT-5.5 can operate software, navigate interfaces, and execute tasks across applications without human handholding at each step. This is no longer a research feature. It’s available to paid ChatGPT subscribers today, starting at the Plus tier.

What To Do

Start by auditing which workflows in your marketing stack involve repetitive, multi-step tasks that currently require a human to move between tools or stages. Campaign briefing, performance reporting, competitive monitoring, and content QA are strong starting candidates - they have clear inputs, defined outputs, and enough repetition to make automation worthwhile.

Pick one and run a structured pilot with GPT-5.5. The goal is not to automate everything at once… it’s to build operational experience with a model that works differently than what most teams are used to. The teams building that experience now will have a meaningful advantage when agentic workflows become standard practice rather than early adoption.

If your team is still using ChatGPT primarily for drafting and ideation, spend time exploring what Workspace Agents and Codex now support. The gap between how most marketing teams use the platform and what it can currently do is significant, and it is only going to widen as GPT-5.5 becomes the default intelligence layer across OpenAI’s product suite.

Ignore This If

You are not currently using ChatGPT or OpenAI-powered tools in any part of your marketing workflow.

Sources

OpenAI - Introducing GPT-5.5 (April 23, 2026)

Fortune - OpenAI releases GPT-5.5 (April 23, 2026)

NVIDIA Blog - OpenAI’s New GPT-5.5 Powers Codex on NVIDIA Infrastructure (April 23, 2026)

MacRumors - OpenAI Debuts GPT-5.5 Claiming Agentic Coding and Research Gains (April 24, 2026)

⚠️ Emerging Shifts

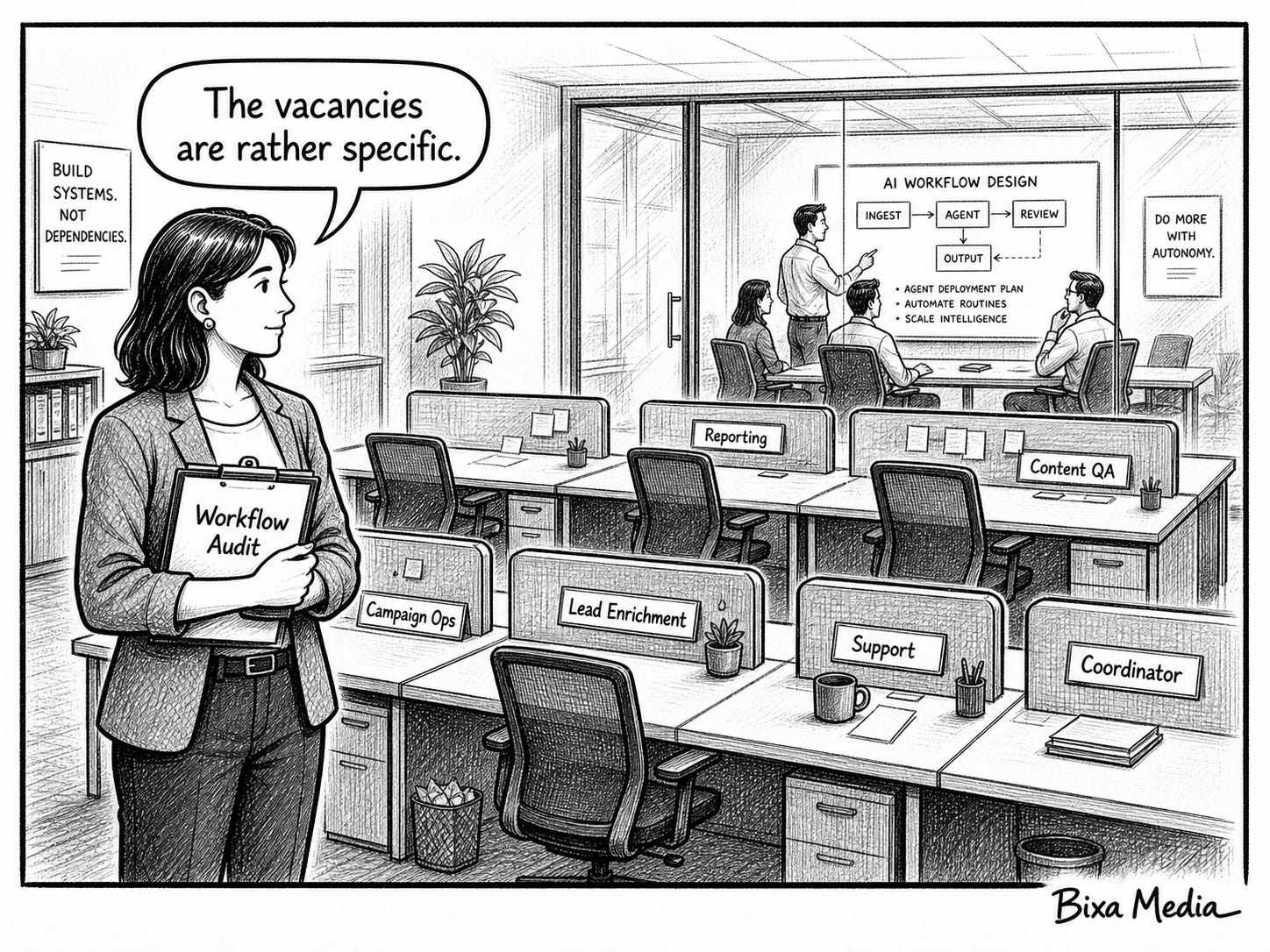

The Roles Being Cut First Tell You Everything About What Comes Next.

What’s Changed

The tech layoff wave of 2026 is the largest concentrated workforce reduction in a decade. Oracle eliminated an estimated 20,000 to 30,000 positions. Amazon cut approximately 16,000 corporate roles in Q1. Meta announced 8,000 cuts - 10% of its workforce - effective May 20, with recruiting and HR absorbing 35 to 40% of those reductions. Microsoft offered voluntary retirement to 8,750 U.S. employees. Salesforce eliminated 4,000 customer support roles. By mid-April, trackers counted over 150,000 tech jobs eliminated in 2026 alone.

AI has been cited as a factor in roughly 27,600 of those cuts - about 13% of total job cut plans, up from 5% in 2025. But the headline number undersells the story. Both things are true simultaneously: AI is already replacing certain roles directly, and companies are using that narrative to fund a much larger infrastructure bet. Google, Amazon, Microsoft, and Meta collectively plan to spend $725 billion on AI infrastructure in 2026 - up 77% from last year. Human salaries are the only cost flexible enough to be cut fast enough to partially offset that build-out. The result is a transfer: headcount budgets becoming AI budgets, with the infrastructure those budgets fund eventually doing more of the work the headcount used to do.

One detail worth noting: Oracle employees reported being asked to document their workflows to train AI systems before being laid off. That sequence - capture the knowledge, cut the role - is the clearest illustration of how the transfer actually works in practice.

Why It Matters

The roles being eliminated are not random. Customer support, recruiting, HR, marketing ops, campaign reporting, and revenue operations are absorbing disproportionate cuts across every company in this wave. It is no coincidence that these are the same functions where agent deployment is most mature and most documented.

The pattern is legible: companies are cutting where agents can already do the work reliably, and investing the savings into infrastructure that will expand that capability further.

For marketers, this is not an abstract labor market story. It is a signal about where the technology is, what it can currently do reliably, and which functions are next in line as capability improves.

What This Means

Vendor support is getting thinner. When the platforms you depend on are cutting customer support headcount and replacing it with agents, the quality and responsiveness of human help you get when something breaks is changing. That has direct operational implications for marketing teams managing complex stacks.

The roles closest to agent capability are the most exposed. Customer support, marketing ops, campaign reporting, content QA, lead enrichment - these are not hypothetical future targets. They are where agent deployment is most mature right now, and the layoff data confirms it. If these functions exist in your org in their current form, the question is not whether they will be affected but when and how.

The governance conversation is coming whether marketing is ready or not. Leadership is making headcount decisions informed by AI capability claims. Marketing teams that haven’t formed a clear view on where agents can and can’t replace human judgment will have that decision made for them by someone who has.

Your own role is not exempt. If your primary value is executing tasks that agents can now do reliably - pulling reports, briefing campaigns, managing workflows, scheduling and coordinating - that is worth confronting honestly. The marketers who will be most valuable in the next two years are not the ones who do the most work. They are the ones who direct AI to do the most work while adding judgment, strategy, and accountability that agents cannot replicate.

What To Do

Audit which functions in your marketing operation map most closely to what agents can currently do reliably. Campaign reporting, content QA, competitive monitoring, lead enrichment, and briefing are strong candidates. This is not an exercise in identifying what to eliminate. It is an exercise in understanding where your team’s time is going and whether that time is being spent on work that compounds or work that agents are already doing elsewhere.

Get ahead of the governance conversation internally. Form a point of view on where AI augments your team versus where it replaces specific functions, and bring that perspective to leadership before they develop one without you. The teams that will fare best in this environment are the ones that proactively redesign their workflows rather than defending existing ones.

Factor vendor support degradation into your stack decisions. If a platform is cutting significant support headcount, that changes the risk calculus around depending on it for mission-critical workflows. Ask your vendors directly how AI is changing their support model before you expand your reliance on their tools.

Assess your own role with the same honesty you would apply to your team. Where do you add value that AI cannot replicate - strategic judgment, client relationships, creative direction, accountability for outcomes? Where are you spending time on execution that agents are already doing reliably elsewhere? The marketers who make themselves demonstrably AI-powered are the ones who make that question easy to answer.

Ignore This If

AI agent deployment is not a factor in your industry and your organization has no plans to evaluate it in the near term.

Sources

Invezz - Is Big Tech’s $725B AI Splurge Being Funded by Mass Layoffs? (May 4, 2026)

Tech Insider - Tech Layoffs 2026: How AI Is Driving the Biggest Workforce Reduction (April 2026)

TIME - Oracle Workers Say They Were Fired After Training AI to Replace Them (April 30, 2026)

The Hill - AI is tied to tech layoffs, but spending — not job replacement — may be the key driver (April 2026)

Newsweek - All the tech giants announcing sweeping layoffs in 2026 (April 2026)

If You Want Help With This

If this story landed close to home, this is exactly the problem AI-Powered Marketing Department is built for.

It helps marketers build practical, repeatable AI workflows so the work that used to define your role gets done faster… and the time that frees up goes toward the strategic, judgment-driven work that agents cannot do.

The marketers who build this now won’t be explaining their value later.

Learn more about AI-Powered Marketing Department here.

Or start with a free preview here.

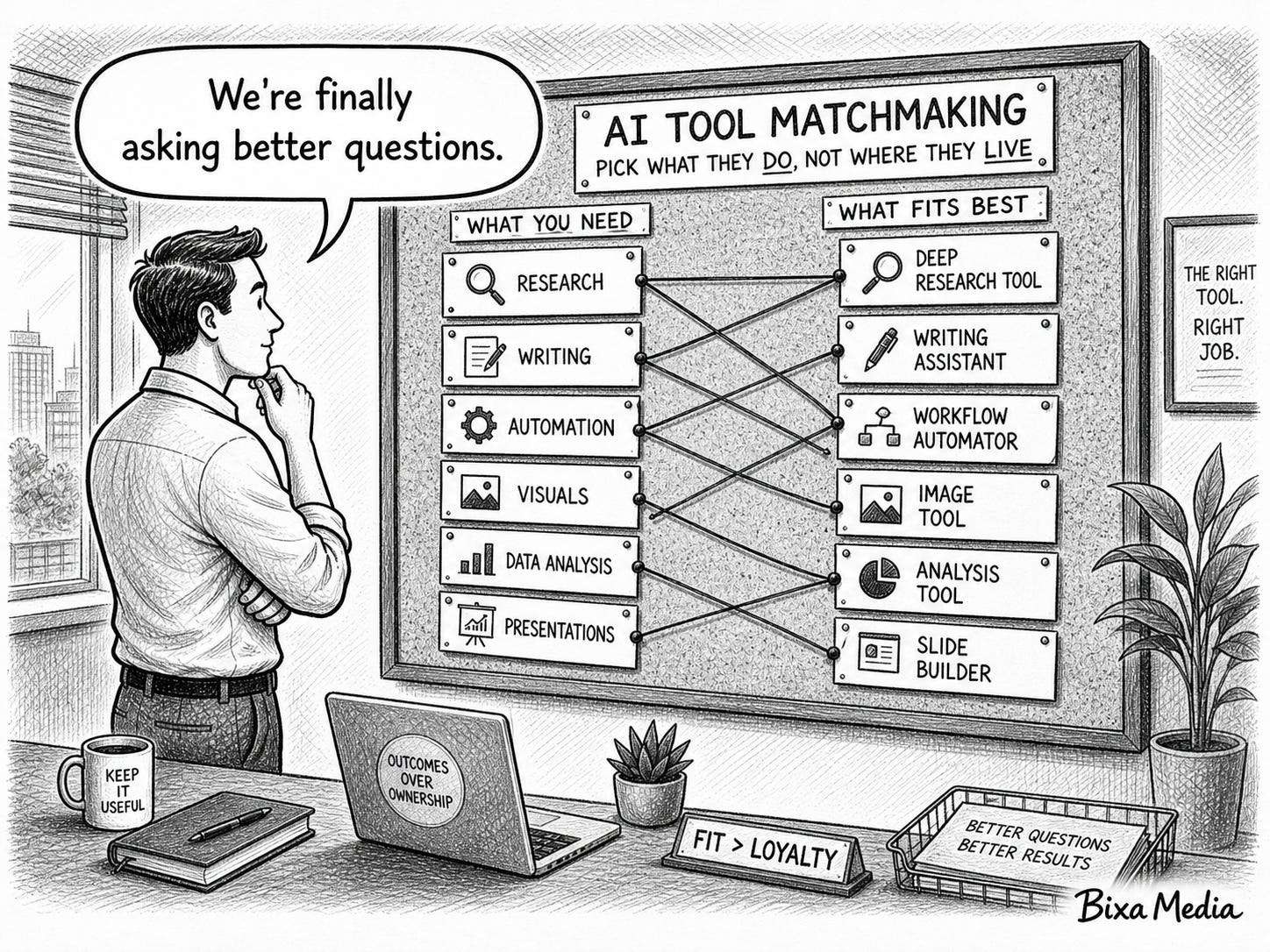

You Can Now Pick AI Tools Based on What They Do, Not Where They Live.

What’s Changed

On April 27, 2026, Microsoft and OpenAI restructured their partnership in a way that changes how enterprises access AI tools. For nearly three years, OpenAI’s models were exclusively available through Microsoft Azure - meaning if your organization ran on AWS or Google Cloud, accessing OpenAI’s tools required working around your existing infrastructure or switching providers. That exclusivity is now gone.

OpenAI models, including GPT-5.5, are now available natively on Amazon Web Services through AWS Bedrock. Google Cloud access is expected to follow later in 2026. Microsoft remains OpenAI’s primary cloud partner and retains a non-exclusive license to OpenAI’s technology through 2032, but the lock-in that defined enterprise AI procurement since 2022 is over.

OpenAI’s own revenue chief framed the shift directly in an internal memo: the Microsoft exclusivity had “limited our ability to meet enterprises where they are.” The inbound demand from enterprises wanting OpenAI tools on AWS was, in her words, “frankly staggering.”

Why It Matters

For two years, the AI vendor map was shaped by exclusive relationships that had nothing to do with which tools were best for your team. If your organization was AWS-native, OpenAI tools came with friction. If your IT and procurement teams were committed to a particular cloud, that commitment quietly determined which AI capabilities were available to your marketing stack.

That constraint shaped tool decisions that are still in place at most organizations today. Teams evaluated, selected, and passed on AI tools based on infrastructure compatibility as much as actual capability. The map those decisions were made against no longer exists.

What This Means

Tool selection can now be based on fit, not on inherited infrastructure. The cloud your organization runs on no longer determines which frontier AI models are available to you. If OpenAI tools were off the table because of AWS or Google Cloud constraints, that conversation is worth reopening with a different starting point.

Vendor lock-in is a smaller risk in AI tool decisions than it was six months ago. The exclusive era that made choosing an AI tool feel like choosing a cloud provider is ending. OpenAI models are available on AWS today and Google Cloud later this year. Anthropic models are available across multiple clouds. The market is moving toward model portability, which changes the risk calculus around committing to any single platform.

The competition among AI platforms is shifting. When model access was tied to cloud relationships, the question was which vendor your org was aligned with. Now that the same models are available across providers, the competition moves to platform quality - which provider offers the best performance, pricing, governance, and integration for the work your team actually does. That is a better question for marketing teams to be asking.

What To Do

Revisit any AI tool decisions that were made or avoided based on cloud compatibility. If OpenAI tools were evaluated and deprioritized because of infrastructure constraints rather than capability concerns, that evaluation is worth reopening now that those constraints have changed.

If your team is in the early stages of building or expanding an AI stack, use this moment to establish evaluation criteria that are cloud-agnostic. Assess tools on what they do, how well they integrate with your workflows, and what governance they support… not on which cloud partnership they were born from.

If you manage vendor relationships or are involved in procurement decisions, flag this shift to the stakeholders who made earlier tool decisions based on cloud alignment. The constraints they were working around may no longer apply.

Ignore This If

Your AI tool decisions have never been shaped by cloud infrastructure constraints and you evaluate tools purely on capability.

Sources

CNBC - OpenAI brings models to AWS after ending exclusivity with Microsoft (April 28, 2026)

TechCrunch - OpenAI ends Microsoft legal peril over its $50B Amazon deal (April 27, 2026)

GeekWire - OpenAI’s models land on Amazon Bedrock, one day after Microsoft exclusivity ends (April 28, 2026)

Windows News - OpenAI breaks cloud exclusivity: Microsoft and AWS reshape enterprise AI leverage (April 2026)

👀 Keep An Eye On

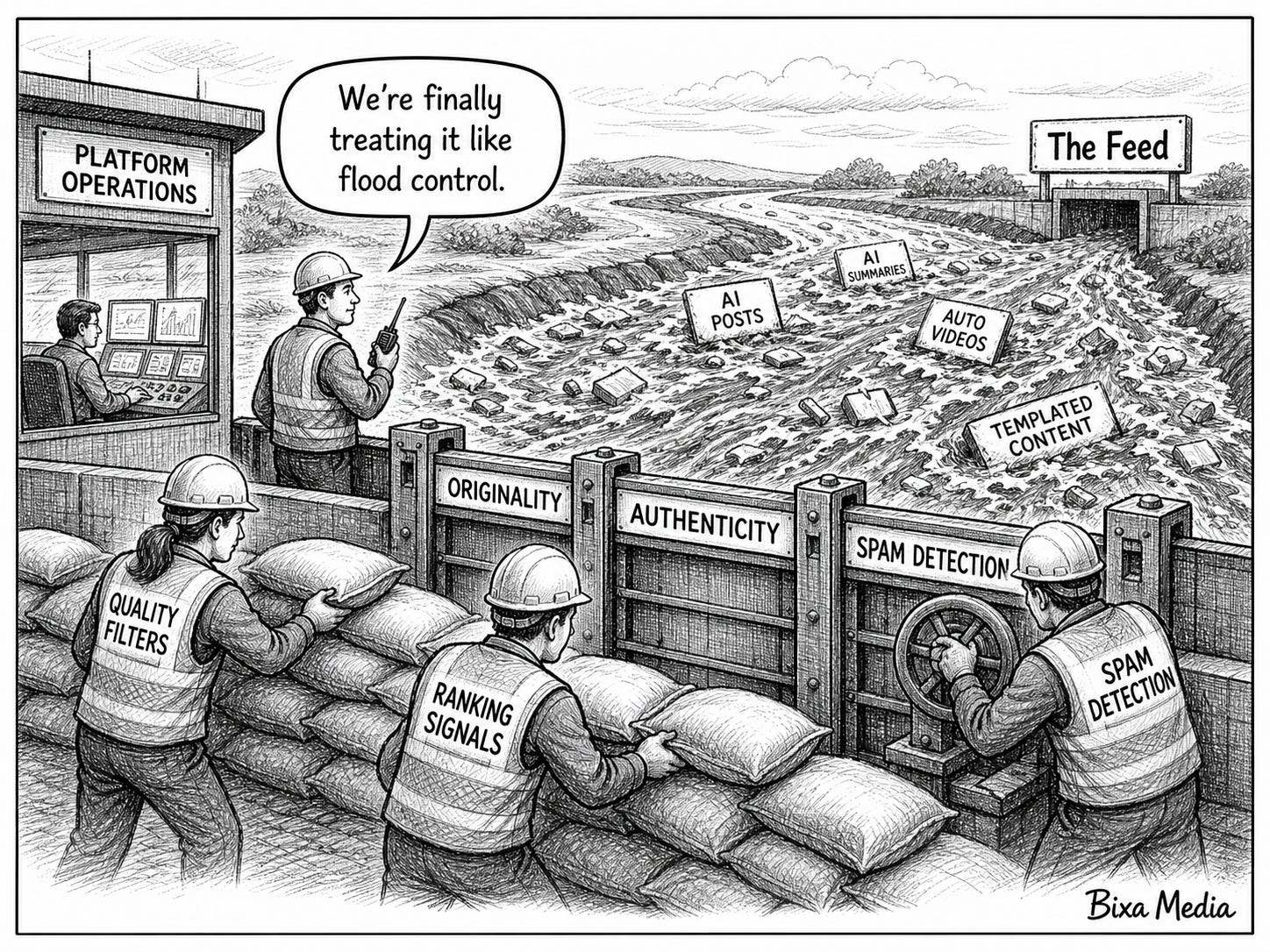

AI Content Is Flooding the Feed. Platforms Are Starting to Push Back.

What’s Changed

Music streaming platforms are the first content distribution channel to hit an AI saturation problem at scale, and the numbers make the issue concrete. Deezer reports that 44% of daily uploads to its platform are now AI-generated tracks. Suno, one of the largest AI music generators, produces seven million tracks per day. Sony Music has requested the removal of more than 135,000 AI-generated songs impersonating its artists.

The volume is enormous. The engagement is not. AI-generated tracks account for less than 3% of total streams on Deezer, and the majority of those streams have been identified as fraudulent - driven by bots rather than human listeners. Platforms are responding. Spotify launched Artist Profile Protection, giving artists the ability to review releases before they go live. Deezer implemented AI detection tools to track and label synthetic content, and it demonetizes those streams.

Consumer sentiment is moving in the same direction. According to Luminate’s Generative AI in Entertainment 2026 report, net listener comfort with AI music fell from -13% to -20% between May and November 2025. Across the board, consumers are more likely to feel uncomfortable with AI-generated music than comfortable with it.

Why It Matters

Music is the leading indicator. The pattern playing out on streaming platforms - massive AI content volume, negligible human engagement, platform policy responses, declining consumer trust - will play out on every content distribution channel as AI generation tools become cheaper and more accessible.

Warner Music Group framed the dynamic precisely in a recent filing: in a world of near-infinite content, what becomes scarce is trust. That is not a music industry observation. It is a content marketing observation. When any feed can be flooded with AI-generated content at effectively zero marginal cost, the channels and creators that audiences trust become more valuable, not less.

What This Means

Volume is no longer a content advantage. AI has made content production effectively free and infinitely scalable. The platforms dealing with this first - music streaming - are showing that volume without authenticity doesn’t drive engagement. That dynamic will reach every content channel.

Platform policy will shape what content competes against yours. The decisions Spotify, Deezer, and Apple Music are making right now about how to handle AI content - labeling, demonetization, distribution limits - will likely be replicated by social platforms and content networks. Those decisions will determine what your content has to compete against and how platforms weigh authenticity signals in their algorithms.

Audience trust is becoming a content asset. In a saturated feed, the brands and creators audiences already trust get more attention, not less. That makes trust-building a strategic priority, not just a brand value.

What To Do

Watch how streaming platforms structure their policy responses to AI content saturation - labeling requirements, demonetization rules, distribution limits - because those decisions will inform how social platforms, content networks, and search engines handle the same problem.

If your content strategy has been focused primarily on volume (more posts, more articles, more output), this is the moment to audit whether that approach is building trust or contributing to the noise your audience is already tuning out.

Ignore This If

Your content strategy is already focused on depth, specificity, and audience trust rather than volume, and you have no concerns about AI-generated content affecting your distribution channels.

Sources

NPR - AI music is flooding streaming platforms. But listeners like it less and less (May 2, 2026)

TIME - AI Slop Is Flooding Streaming — and Musicians Are Fighting Back (March 26, 2026)

Luminate - Generative AI in Music, Film & TV 2026 (2026)

Warner Music Group - FY2026 Form 8-K (2026)

The Bottom Line

A map doesn’t stop working all at once. It becomes unreliable gradually… a road that moved, a landmark that disappeared, a route that no longer takes you where it used to. You don’t know it’s wrong until you’re somewhere you didn’t expect to be.

That’s where a lot of marketing teams are right now. The assumptions underlying how they allocate budget, evaluate tools, staff their teams, and create content were accurate enough twelve months ago. They’re less accurate today. And the gap between the map and the territory is widening faster than most teams are updating their assumptions.

The advantage in this environment doesn’t go to the teams that saw everything coming. It goes to the teams that update fastest when the ground shifts, and that treat each new data point not as a headline to bookmark but as a reason to ask whether their current approach still makes sense.

Which of these changes is already showing up in how your team operates? And which ones are you still treating as someone else's problem? I'd love to hear in the comments.

If you want more issues like this, subscribe to Marketing Seeds and share this newsletter with a colleague or team member who's still navigating with last year's map.